SnowEx Database Preview

Contents

SnowEx Database Preview¶

![fireworks] (https://i.gifer.com/FH7W.gif “fireworks”)

Learning Objectives:

First taste of the database!

Code snippets to extract and prep data.

Generate ideas for project pitches.

Set Up Computing Environment¶

# standard imports

import numpy as np

import matplotlib.pyplot as plt

import datetime

import re

import geopandas as gpd

# some mapping widgets

import ipyleaflet

from ipyleaflet import Map, GeoData, Rectangle, basemaps, LayersControl, basemap_to_tiles, TileLayer, SplitMapControl, Polygon

import ipywidgets

#database imports

from snowexsql.db import get_db

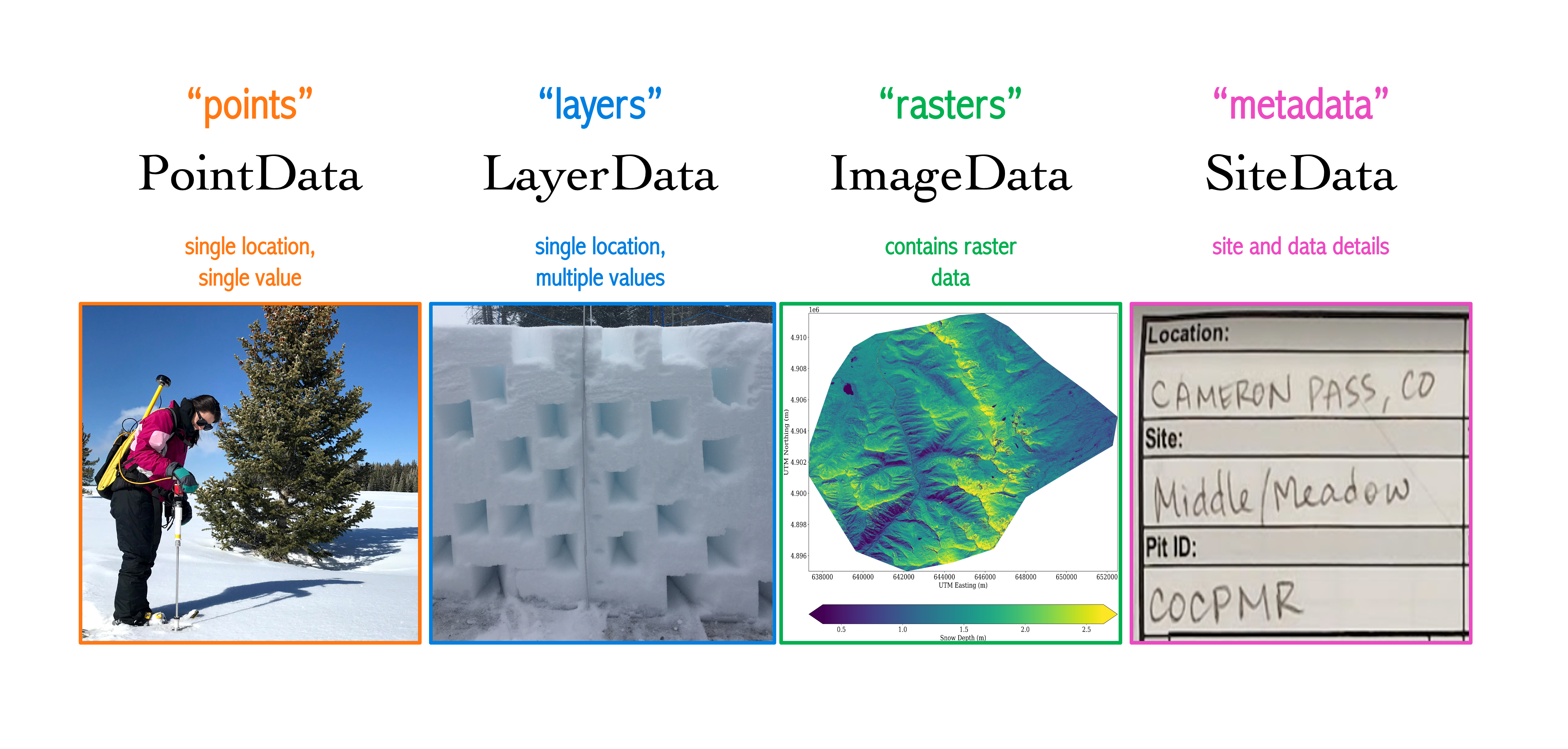

from snowexsql.data import PointData, LayerData, ImageData, SiteData

from snowexsql.conversions import query_to_geopandas, query_to_pandas

POINTER –> Notice where I import the four primary database tables. Can anyone call out what code line does this from the code block above?

# load the database

db_name = 'snow:hackweek@db.snowexdata.org/snowex'

engine, session = get_db(db_name)

print('snowexsql database successfully loaded!')

snowexsql database successfully loaded!

What’s the first thing you might like to do?¶

Find overlapping data for data analysis comparison

Grand Mesa IOP (2020)¶

Example 1: Let’s find all the pits that overlap with an airborne sensor of interest!¶

First, it would be helpful to know, which of the airborne sensors are part of the database, right?

# Query the session using .surveyors() to generate a list

qry = session.query(ImageData.surveyors)

# Locate all that are distinct

airborne_sensors_list = session.query(ImageData.surveyors).distinct().all()

print('list of airborne sensors by "surveyor" name: \n', airborne_sensors_list)

list of airborne sensors by "surveyor" name:

[('USGS',), ('UAVSAR team, JPL',), ('ASO Inc.',)]

1a). Unsure of the flight date, but know which sensor you’d like to overlap with, here’s how:¶

# Airborne sensor from list above

sensor = 'UAVSAR team, JPL'

# Form on the Images table that returns Raster collection dates

qry = session.query(ImageData.date)

# Filter for UAVSAR data

qry = qry.filter(ImageData.surveyors == sensor)

# Grab the unique dates

qry = qry.distinct()

# Execute the query

dates = qry.all()

# Clean up the dates

dates = [d[0] for d in dates]

dlist = [str(d) for d in dates]

dlist = ", ".join(dlist)

print('%s flight dates are: %s' %(sensor, dlist))

# Find all the snow pits done on these days

qry = session.query(SiteData.geom, SiteData.site_id, SiteData.date)

qry = qry.filter(SiteData.date.in_(dates))

# Return a geopandas df

df = query_to_geopandas(qry, engine)

# View the returned pandas dataframe!

print(df.head())

# Close your session to avoid hanging transactions

session.close()

UAVSAR team, JPL flight dates are: 2020-01-31, 2020-02-12

geom site_id date

0 POINT (742453.000 4325752.000) 1C14 2020-01-31

1 POINT (744396.000 4323540.000) 8C26 2020-01-31

2 POINT (740652.000 4327445.000) 2C2 2020-01-31

3 POINT (741580.000 4326713.000) 2C9 2020-01-31

4 POINT (745340.000 4322754.000) 8S28 2020-01-31

1b).Want to select an exact flight date match? Here’s how:¶

# Pick a day from the list of dates

dt = dates[0]

# Find all the snow pits done on these days

qry = session.query(SiteData.geom, SiteData.site_id, SiteData.date)

qry = qry.filter(SiteData.date == dt)

# Return a geopandas df

df_exact = query_to_geopandas(qry, engine)

print('%s pits overlap with %s on %s' %(len(df_exact), sensor, dt))

# View snows pits that align with first UAVSAR date

df_exact.head()

17 pits overlap with UAVSAR team, JPL on 2020-01-31

| geom | site_id | date | |

|---|---|---|---|

| 0 | POINT (742453.000 4325752.000) | 1C14 | 2020-01-31 |

| 1 | POINT (744396.000 4323540.000) | 8C26 | 2020-01-31 |

| 2 | POINT (740652.000 4327445.000) | 2C2 | 2020-01-31 |

| 3 | POINT (741580.000 4326713.000) | 2C9 | 2020-01-31 |

| 4 | POINT (745340.000 4322754.000) | 8S28 | 2020-01-31 |

1c). Want to select a range of dates near the flight date? Here’s how:¶

# Form a date range to query on either side of our chosen day

date_range = [dt + i * datetime.timedelta(days=1) for i in [-1, 0, 1]]

# Find all the snow pits done on these days

qry = session.query(SiteData.geom, SiteData.site_id, SiteData.date)

qry = qry.filter(SiteData.date.in_(date_range))

# Return a geopandas df

df_range = query_to_geopandas(qry, engine)

# Clean up dates (for print statement only)

dlist = [str(d) for d in date_range]

dlist = ", ".join(dlist)

print('%s pits overlap with %s on %s' %(len(df_range), sensor, dlist))

# View snow pits that are +/- 1 day of the first UAVSAR flight date

df_range.sample(10)

47 pits overlap with UAVSAR team, JPL on 2020-01-30, 2020-01-31, 2020-02-01

| geom | site_id | date | |

|---|---|---|---|

| 10 | POINT (741132.000 4327061.000) | 2C6 | 2020-01-31 |

| 44 | POINT (743109.000 4322924.000) | 2S11 | 2020-02-01 |

| 46 | POINT (746303.000 4322571.000) | 9S40 | 2020-02-01 |

| 0 | POINT (742453.000 4325752.000) | 1C14 | 2020-01-31 |

| 32 | POINT (745598.000 4323988.000) | 5N41 | 2020-01-30 |

| 16 | POINT (741960.000 4326644.000) | 6C10 | 2020-01-31 |

| 11 | POINT (744757.000 4323667.000) | 6C24 | 2020-01-31 |

| 41 | POINT (746546.000 4324066.000) | 8N45 | 2020-02-01 |

| 28 | POINT (743402.000 4324736.000) | 5C20 | 2020-01-30 |

| 6 | POINT (740839.000 4327345.000) | 2C4 | 2020-01-31 |

1d). Have a known date that you wish to select data for, here’s how:¶

# Find all the data that was collected on 2-12-2020

dt = datetime.date(2020, 2, 12)

#--------------- Point Data -----------------------------------

# Grab all Point data instruments from our date

point_instruments = session.query(PointData.instrument).filter(PointData.date == dt).distinct().all()

point_type = session.query(PointData.type).filter(PointData.date == dt).distinct().all()

# Clean up point data (i.e. remove tuple)

point_instruments = [p[0] for p in point_instruments]

point_instruments = ", ".join(point_instruments)

point_type = [p[0] for p in point_type]

point_type = ", ".join(point_type)

print('Point data on %s are: %s, with the following list of parameters: %s' %(str(dt), point_instruments, point_type))

#--------------- Layer Data -----------------------------------

# Grab all Layer data instruments from our date

layer_instruments = session.query(LayerData.instrument).filter(LayerData.date == dt).distinct().all()

layer_type = session.query(LayerData.type).filter(LayerData.date == dt).distinct().all()

# Clean up layer data

layer_instruments = [l[0] for l in layer_instruments if l[0] is not None]

layer_instruments = ", ".join(layer_instruments)

layer_type = [l[0] for l in layer_type]

layer_type = ", ".join(layer_type)

print('\nLayer Data on %s are: %s, with the following list of parameters: %s' %(str(dt), layer_instruments, layer_type))

#--------------- Image Data -----------------------------------

# Grab all Image data instruments from our date

image_instruments = session.query(ImageData.instrument).filter(ImageData.date == dt).distinct().all()

image_type = session.query(ImageData.type).filter(ImageData.date == dt).distinct().all()

# Clean up image data (i.e. remove tuple)

image_instruments = [i[0] for i in image_instruments]

image_instruments = ", ".join(image_instruments)

image_type = [i[0] for i in image_type]

image_type = ", ".join(image_type)

print('\nImage Data on %s are: %s, with the following list of parameters: %s' %(str(dt), image_instruments, image_type))

Point data on 2020-02-12 are: camera, magnaprobe, pit ruler, with the following list of parameters: depth

Layer Data on 2020-02-12 are: snowmicropen, with the following list of parameters: force, density, grain_size, permittivity, lwc_vol, manual_wetness, grain_type, temperature, hand_hardness

Image Data on 2020-02-12 are: UAVSAR, L-band InSAR, with the following list of parameters: insar correlation, insar interferogram real, insar interferogram imaginary, insar amplitude

Time Series (2020)¶

# Set up database

from snowexsql.db import get_db

from snowexsql.data import PointData, LayerData, ImageData, SiteData

from snowexsql.conversions import query_to_geopandas

# Query temp profiles from 2 sites: Banner Forest, Banner Snotel

# Plot full timeseries of temp profile vs depth in side by side plot

Explore the Spatial Extent of Field Campaign Data¶

bbox = [-125, 49, -102, 31]

west, north, east, south = bbox

bbox_ctr = [0.5*(north+south), 0.5*(west+east)]

m = Map(basemap=basemaps.CartoDB.Positron, center=bbox_ctr, zoom=4)

rectangle = Rectangle(bounds=((south, west), (north, east))) #SW and NE corners of the rectangle (lat, lon)

m.add_layer(rectangle)

m

# more info on available basemaps here: https://ipyleaflet.readthedocs.io/en/latest/map_and_basemaps/basemaps.html

Query the Database to add Spatial Data to Our Map¶

# # database imports

# from snowexsql.db import get_db

# from snowexsql.data import PointData, LayerData, ImageData, SiteData

# from snowexsql.conversions import query_to_geopandas, query_to_pandas

# load the database

db_name = 'snow:hackweek@db.snowexdata.org/snowex'

engine, session = get_db(db_name)

Let’s find out where we have liquid water content (LWC) data¶

Pointer –> LWC data is in the LayerData table, because data at a single location were measured as a profile on the pit wall face (i.e has a vertical dimension)

# query the LayerData for all LWC values

# qry = session.query(LayerData).filter(LayerData.type == 'lwc_vol')

# query the geometry property of PointData

# qry = session.query(LayerData.geom).distinct()

# query the LayerData for all LWC values

qry = session.query(LayerData.longitude, LayerData.latitude).filter(LayerData.type == 'lwc_vol').distinct() #

# # query the geometry property of PointData

# qry = query(LayerData.geom).distinct()

# # limit the number of entries

# qry = qry.limit(2000)

# convert query to geopandas df

df = query_to_pandas(qry, engine)

df = gpd.GeoDataFrame(df, geometry=gpd.points_from_xy(df.longitude, df.latitude))

print(type(df))

# # convert query to geopandas df

# df = query_to_geopandas(qry, engine)

print(df.head())

# how many did we retrieve?

print(f'{len(df.index)} records returned!')

session.close()

<class 'geopandas.geodataframe.GeoDataFrame'>

longitude latitude geometry

0 -108.221437 39.034623 POINT (-108.22144 39.03462)

1 -108.220926 39.036475 POINT (-108.22093 39.03648)

2 -108.220239 39.064288 POINT (-108.22024 39.06429)

3 -108.220220 39.064180 POINT (-108.22022 39.06418)

4 -108.219632 39.034409 POINT (-108.21963 39.03441)

155 records returned!

Let’s add these points to our map!¶

m = Map(basemap=basemaps.CartoDB.Positron, center=bbox_ctr, zoom=4)

# geo_data = GeoData(geo_dataframe = df)

geo_data = GeoData(geo_dataframe = df,

style={'color': 'black', 'radius':8, 'fillColor': '#3366cc', 'opacity':0.5, 'weight':1.9, 'dashArray':'2', 'fillOpacity':0.6},

hover_style={'fillColor': 'red' , 'fillOpacity': 0.2},

point_style={'radius': 5, 'color': 'red', 'fillOpacity': 0.8, 'fillColor': 'blue', 'weight': 3},

name = 'lwc obs.')

m.add_layer(geo_data)

m.add_control(LayersControl())

m

grab geojson from gist¶

import geopandas as gpd

import requests

url = "https://gist.githubusercontent.com/meganmason/21e6de1f7487b3e7defbe60ddde07e0e/raw/06939d8fffc0539a6014ee0d7c0c1d58938d9e8d/pits_raw.geojson"

gdf = gpd.read_file(url)

gdf.head()

| field_1 | Identifier | PitID | Location | Site | Easting | Northing | Zone | Latitude | Longitude | Date | Local/Stan | geometry | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | CAAMCL_20191220_1300 | CAAMCL | American River Basin | Caples Lake | 757216 | 4288787 | 10 | 38.71033 | -120.04187 | 12/20 | 13:00:00 | POINT (-120.04187 38.71033) |

| 1 | 1 | CAAMCL_20200131_1215 | CAAMCL | American River Basin | Caples Lake | 757220 | 4288788 | 10 | 38.71034 | -120.04182 | 1/31 | 12:15:00 | POINT (-120.04182 38.71034) |

| 2 | 2 | CAAMCL_20200214_1200 | CAAMCL | American River Basin | Caples Lake | 757218 | 4288787 | 10 | 38.71033 | -120.04185 | 2/14 | 12:00:00 | POINT (-120.04185 38.71033) |

| 3 | 3 | CAAMCL_20200221_1200 | CAAMCL | American River Basin | Caples Lake | 757217 | 4288780 | 10 | 38.71027 | -120.04186 | 2/21 | 12:00:00 | POINT (-120.04186 38.71027) |

| 4 | 4 | CAAMCL_20200228_1130 | CAAMCL | American River Basin | Caples Lake | 757215 | 4288778 | 10 | 38.71025 | -120.04188 | 2/28 | 11:30:00 | POINT (-120.04188 38.71025) |